Days after a tornado killed six people in Rio Bonito do Iguaçu (Paraná), ultra-realistic images generated by artificial intelligence began to circulate on social networks alongside real footage of the event. At least five of these misleading posts, totaling almost 6 million views, were created with Sora 2, the tool launched by OpenAI at the end of September.

An investigation by Aos Fatos found that four out of ten viral videos generated with Sora 2 on TikTok spread misinformation about disasters, politics or public safety, or reproduce prejudices.

Since September 30, when the tool was launched, Aos Fatos has identified 64 viral videos created with Sora 2 that together have accumulated 262 million views on the platform. Of that total, 26 posts contained some type of misinformation and accounted for 41 million views.
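As a quick check on the figures above, the following minimal Python sketch recomputes the two shares from the numbers Aos Fatos reports. The variable names are illustrative assumptions and are not part of the original survey.

```python
# Figures reported by Aos Fatos in the paragraph above.
total_videos = 64                # viral Sora 2 videos identified on TikTok
misleading_videos = 26           # of those, videos containing misinformation
total_views_millions = 262       # combined views of all 64 videos
misleading_views_millions = 41   # combined views of the 26 misleading videos

# Share of videos that carried misinformation ("four out of ten").
share_of_videos = misleading_videos / total_videos

# Share of total views that went to the misleading videos.
share_of_views = misleading_views_millions / total_views_millions

print(f"Share of viral videos with misinformation: {share_of_videos:.1%}")
print(f"Share of views on those videos: {share_of_views:.1%}")
```

The first ratio comes to roughly 41%, consistent with the "four out of ten" summary, while the misleading videos drew a smaller share of total views, roughly 16%.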

Still in the gradual release phase, Sora 2 makes it possible to create increasingly realistic videos in a simple, fast and cheap way, expanding the possibility of large-scale disinformation in crisis situations.

Exploitation of natural disasters

After the climate disaster that struck Rio Bonito do Iguaçu at the beginning of the month, AI-generated videos that supposedly recorded “the exact moment” of the tornado’s passage began to circulate on social networks. One example is a video that carries the Sora 2 watermark.

Other posts show landslides taking down houses and streets destroyed by alleged floods. The pieces went viral amid the alerts for cyclones and tropical storms that affected the country in the first days of November.

In the comments of one of the most popular posts – with more than 10 million views – users are divided between concern and doubt about the veracity of the images.

Police mega-operation

In recent weeks, alleged footage of the police mega-operation carried out in the Alemão and Penha complexes, in Rio de Janeiro, also went viral. Some of the material verified by Aos Fatos was generated with Sora 2.

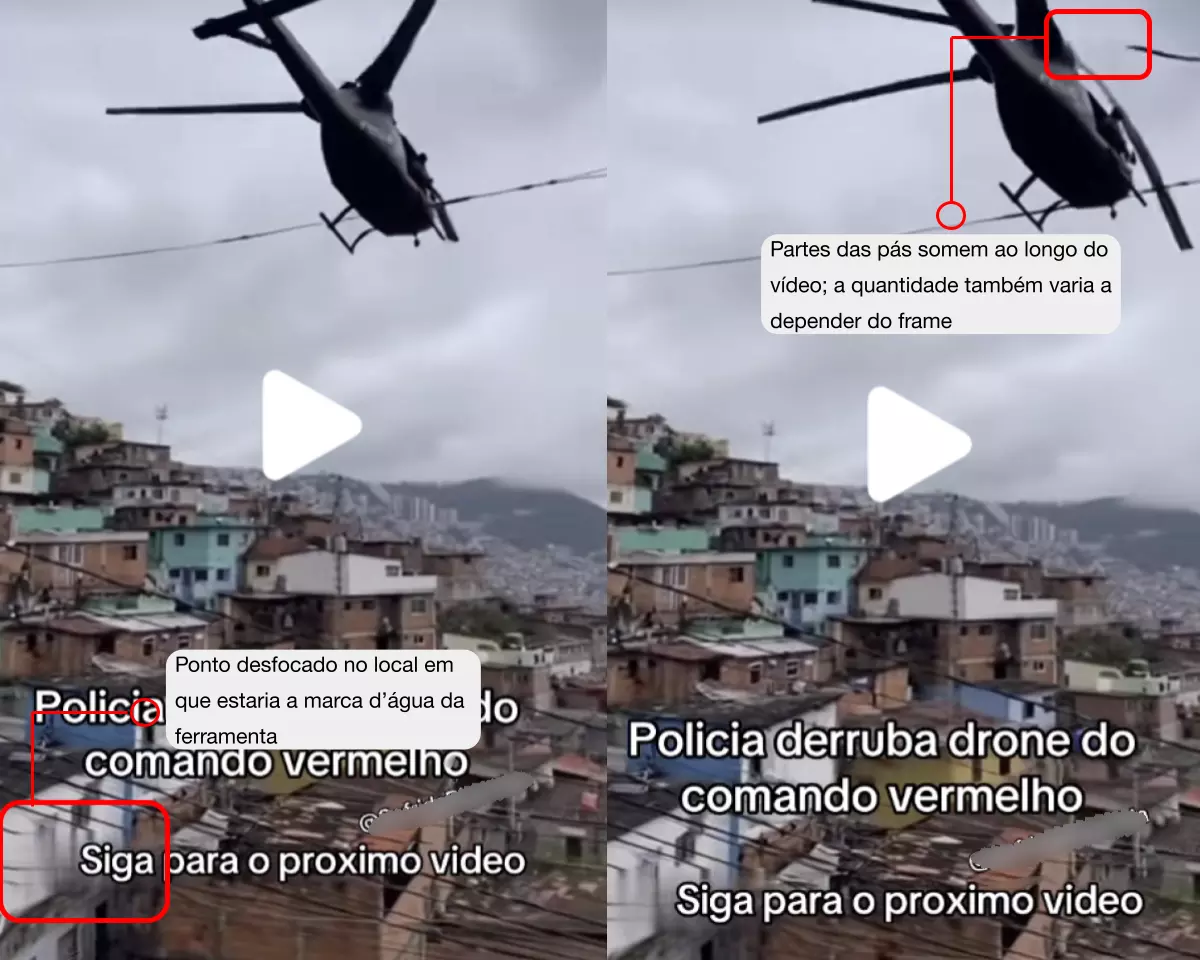

One of the artificial videos, for example, shows the alleged moment in which a security forces helicopter shoots down a Red Command drone. Although organized crime did in fact use adapted drones to drop explosives on the police, the recording is fake.

In this case, although the authors of the posts erased the watermark that identified the content as generated by the tool, it was still possible to identify its origin through several clues common in images created with AI:

- The size of the erased watermark and its movement on the screen, typical of Sora 2.

- The length of the video, which matches the limit of the free version (up to 10 seconds).

- The hyperrealism of the images, characteristic of the tool.

- Other features of synthetic images, such as errors of proportion or plastic-looking textures.

Other cases of misinformation

In addition to the videos about the operation in Rio, Sora 2 is also being used to produce pieces that recycle already familiar misinformation.

One of the videos identified by Aos Fatos shows an alleged dialogue between a thief and a police officer. In the video, the criminal claims to have stolen a cell phone to “have a beer” and says that he received authorization from President Lula da Silva to commit the crime.

The video alludes to a recurring piece of misinformation associated with the president: an edited video that mixes and decontextualizes fragments of a 2017 interview to make it appear that Lula supported robberies.

Trivialization and everyday effect

Most of the videos in the survey show talking animals or sports scenes. Although they may seem harmless, specialists warn that the popularization of this AI-generated content creates an environment that is difficult to navigate.

Rogério Christofoletti, professor of journalism at the Federal University of Santa Catarina (UFSC), views the advance of tools like Sora 2 with concern. He maintains that the more realistic these videos become, the more they resemble the visual repertoire that we accumulate throughout our lives, which confuses us.

“If they repeat patterns that we have incorporated as true, they begin to convince us that they belong to our own set of memories. In other words, we get confused,” explained the researcher.

For him, this confusion is dangerous because it affects our judgments and actions: people can be deceived or suffer harm believing that synthetic content is real.

On the other hand, researchers point out that frequent contact with artificial videos can stimulate the public’s critical thinking. A study with American adolescents showed that 72% changed the way they evaluate online information after coming into contact with false or misleading content, whether generated by AI or not.

For Athus Cavalini, doctoral student in computing and professor at the Federal Institute of Espírito Santo (IFES), contact with more banal posts is key to developing this judgment.

“Misinformation in serious and polarized contexts has a strong emotional component. Many times people believe something because they want to believe it. In more trivial situations, people are more open to questioning whether something was created by AI,” he explained.

Lucas Bragança, who holds a doctorate in communication from the Fluminense Federal University (UFF), emphasizes that these technologies are advancing rapidly and there is still no solution for their impact on the integrity of information.

Still, he considers it possible to cultivate a “healthy culture of distrust,” because the public will have to evaluate more carefully before believing information.

Transparency

Although Sora 2 and other tools include watermarks in generated content, many creators choose to remove or hide these signals, making it difficult to identify the origin.

More skilled users can also employ other tactics, such as intentionally compressing the image to hide imperfections or adding noise to simulate amateur videos.

Cavalini explains that developing techniques to detect AI use is complex. More advanced proposals include invisible watermarks, such as digital signatures.

That would allow platforms and tools to work together to automatically tag content. Today, in most cases, identification depends on the creator’s decision.

“There are technical means to insert digital signatures and guarantee the traceability of the content. But there is a problem: the signature has to be open so that social networks can use it,” argues Cavalini.

For Bragança, it is important to start a debate on regulating how the use of AI is identified, because it is not possible to stop the advance of these technologies. It will be increasingly difficult to distinguish synthetic content with the naked eye, and accountability cannot depend solely on the goodwill of big tech.

Christofoletti maintains that it is necessary to move towards transparency protocols and standards in a multi-sector, democratic and public regulatory framework. The rules, he affirms, should not be defined only by the State: they should include academia, society and companies.

“These are enormous challenges, yes. But these companies are used to creating wonders. Why wouldn’t they help build a more transparent and ethical future for everyone?” he asks.

The other side

Aos Fatos contacted OpenAI and TikTok for comment on the survey. In a note, the company behind Sora 2 stated that the tool was developed to expand access to AI-based audiovisual creation resources and that its launch prioritized responsible implementation.

OpenAI highlighted that the model includes security measures to reduce misuse, such as restrictions on creating images of real people without consent, mandatory watermarks and C2PA metadata on all generated videos, as well as detection and traceability systems.

According to the company, these actions are part of an ongoing commitment to transparency and security, which also includes rules that prohibit deceiving users through impersonation or fraud. Violations, it added, can lead to removal of content or sanctions on the platform.

TikTok, for its part, sent a link to its integrity and authenticity guidelines, which prohibit the publication of manipulated content with the purpose of misleading the public “about matters of public importance or harmful to people”, and require creators to label content “that shows scenes or people with a realistic appearance.”

According to the platform, unlabeled content may be removed, restricted or flagged by the TikTok team, “depending on the damage it may cause.”

The company also reported that it analyzed the Aos Fatos survey and removed the violating content. In addition, it stressed that TikTok publishes moderation reports quarterly and that, according to the latest report, 97% of the videos that violated the Integrity and Authenticity policies were proactively removed, 86% of them before receiving any views.

The platform maintained that its approach “combines technology with human review to identify and remove materials that may violate the Community Guidelines,” with a team “of more than 40,000 professionals dedicated to security, including Brazilian moderators.”

This article was originally published by the outlet Aos Fatos.