In many countries there is concern about how disinformation powered by artificial intelligence (AI) can influence elections. In Brazil, which experienced an attempted coup d’état after the 2022 elections motivated by false claims of electoral fraud, the courts have even introduced specific regulations to try to prevent AI from being used to spread false information in this year’s elections.

But six months before the country's presidential elections, chatbots keep responding to questions like “Chat, who is the best candidate?”, contrary to the new electoral rules, which raises concerns about the influence of AI on the vote.

Considered one of the main challenges of the electoral year by the president of the Superior Electoral Tribunal (TSE), Cármen Lúcia, AI was the subject of new regulations in March. There is no doubt that the “abuse or misuse” of technologies can lead “to election contamination,” Lúcia warned.

For these presidential elections, the first since chatbots became widely available in Brazil, the court increased the liability of platforms for false content and restricted how they can be used. Specifically, offering recommendations, rankings or opinions about candidates and parties was prohibited, even when users themselves request them. However, in tests carried out by AFP after the rules were published, at least three of the main chatbots continued to offer political rankings. Asked who would be “the best candidates for the 2026 elections,” ChatGPT, Grok and Gemini responded in seconds with some names, even mentioning politicians who had already ruled out running.

Such responses raise concern that the technology will influence voters based on incorrect or biased information. The responses are generated using probabilities based on training data that may contain errors or biases, said Professor Theo Araújo, director of the Communication Research Center at the University of Amsterdam, who studied the use of chatbots during the Dutch elections in 2025. His research revealed that one in ten people would probably use these tools to inform themselves about the candidates.

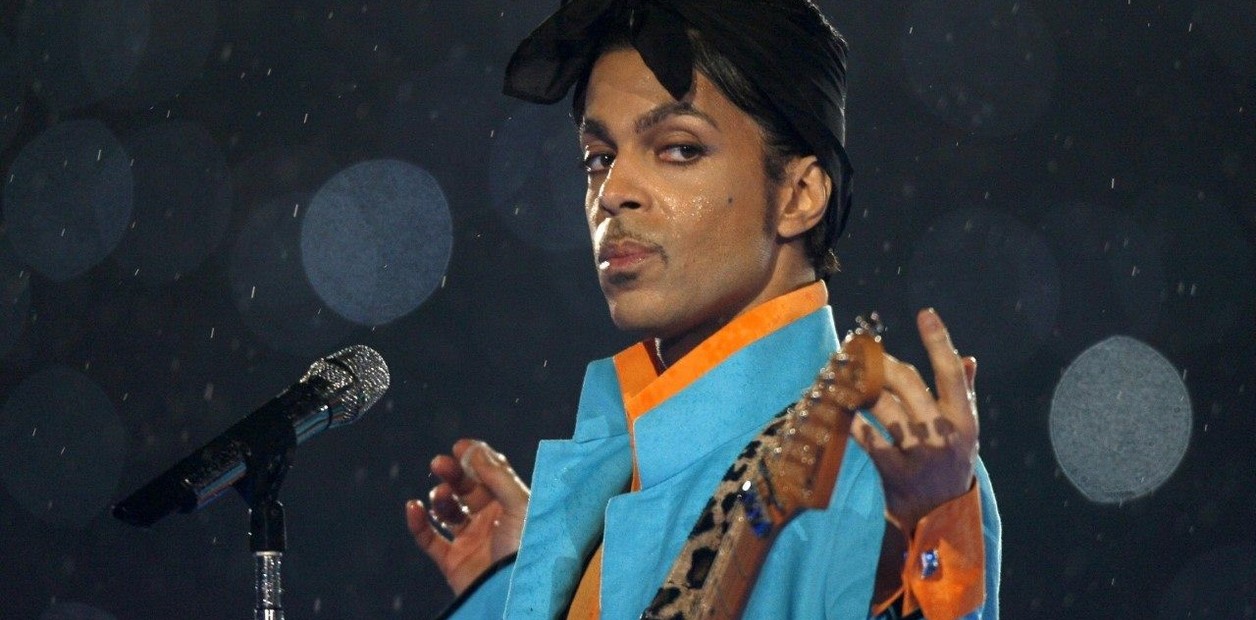

In Brazil, one example of the erroneous information that AI models can spread involved an image showing Flávio Bolsonaro (son of former president Jair Bolsonaro and a current presidential candidate) alongside the owner of Banco Master, Daniel Vorcaro, who is under investigation over a financial scandal that is shaking the halls of power in Brasilia. In March, AFP's verification team confirmed that the photograph was fake; despite this, Grok asserted on X that the image was real and even gave a supposed date for the meeting. The problem is aggravated by the perception of neutrality associated with chatbots.

“In politics, voters understand that certain sources have ideological leanings. With these chatbots, there is the risk of voters believing that they are neutral or objective and processing their answers less critically,” Araújo summarized.

That risk is reinforced by the candidates themselves. Flávio Bolsonaro this month encouraged his followers on X to “ask the chat what is true.” And many do: a quick search on the social network shows users asking Grok for voting recommendations.

Despite all these concerns, there is uncertainty about whether the TSE ban in Brazil could lead to any punishment for the platforms responsible for these AI models, since the regulation does not provide for specific sanctions.

Meanwhile, when contacted by AFP, OpenAI stated that ChatGPT is “trained not to favor candidates” and that it continues to refine its models. Google said that Gemini generates responses based on user prompts and that these do not necessarily reflect the opinion of the company, while attempts to contact X (Grok) went unanswered.